Straight from the Desk

Syz the moment

Live feeds, charts, breaking stories, all day long.

The "Sell First, Ask Questions Later" Era is officially here.

Markets are panicking over AI replacing jobs and businesses—a trend called AI Displacement Anxiety. Investors aren’t just worried about current earnings; they’re fearing future obsolescence from AI that doesn’t even exist yet. Example: Commercial real estate giants ($CBRE, $JLL, $CWK) saw shares drop 15%+ because of AI fears, not actual competition.

The "Old Guard" of Finance is officially on notice.

For years, complex tax planning was a key competitive advantage for expensive firms because it was slow, manual, and mentally demanding. Altruist has disrupted this model with Hazel AI, which can instantly analyze documents like tax returns, pay stubs, and meeting notes to generate personalized strategies in real time, turning days of back-office work into minutes. It enables advanced "what-if" scenario modeling for bonuses, home sales, retirement, and lifestyle changes through interactive dashboards, while addressing security concerns with zero-data-retention policies. At just $60 per month, it democratizes sophisticated tax optimization tools previously reserved for the ultra-wealthy, shifting power toward independent advisors. The message is clear: simply understanding tax returns is no longer enough. Advisors who leverage AI to enhance insights and strengthen client relationships will be the ones who thrive. Source: Davis Janowski

The AI action is in Asia

See the chart below: SK Hynix, Samsung, and Advantest are all skyrocketing. Meanwhile, Nvidia ($NVDA, the yellow line at the bottom) remains stuck. Source: LESG, TME

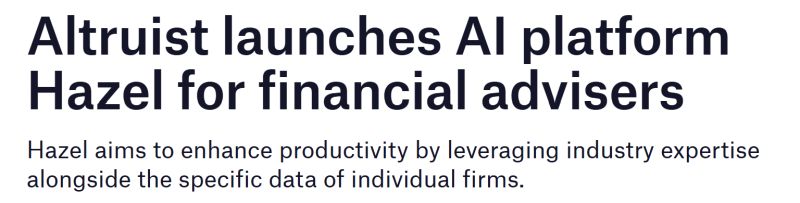

"A pair trade for the AI transition: long API / short slides"

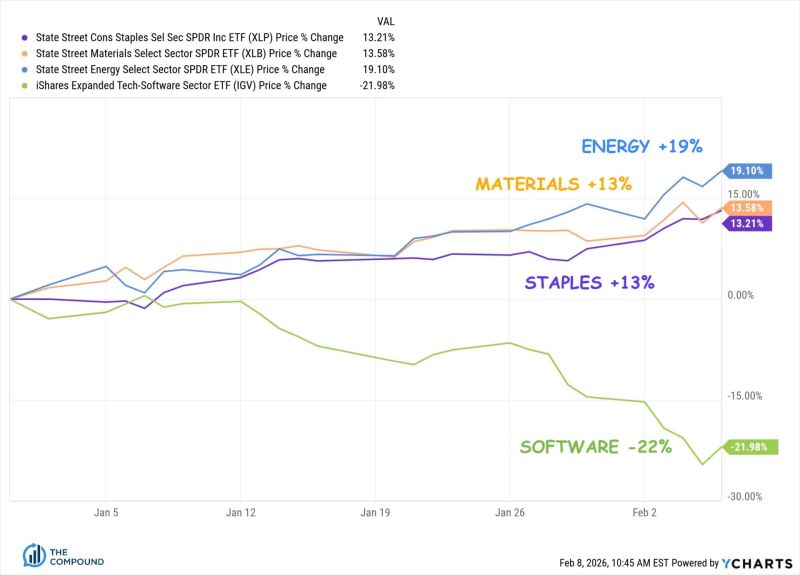

🚨 The "Software is Dead" narrative is wrong. You're just looking at the wrong software.

The market is panicking. The $IGV ETF is down 30%. The headlines say AI is writing code now, so software companies are toast. 📉 They're making a massive category error. If you're investing without looking at the "plumbing," you're missing the biggest bifurcation of the decade. Here is how the "Singularity" is actually playing out:

1. The Victim: Human-UI SaaS (Type 1) 🖱️ If your software requires a human to stare at a dashboard for 8 hours, you have a target on your back. The Logic: AI agents replace humans. One less Customer Service rep = one less Zendesk seat. One less PM = one less Monday.com seat. The Result: Seat-based SaaS compresses as headcount shrinks.

2. The Winner: Bot-Infrastructure (Type 2) 🤖 AI agents don't have eyes. They use APIs. They don't click; they call. The Logic: One human generates a few clicks an hour. One AI agent generates thousands of API calls per minute. The Winners: The "Tollbooth Operators" such as Okta, MongoDB, Snowflake, and Datadog. They don't care if the user is a human or a bot; they charge per unit of consumption. Bots consume orders of magnitude more than we do.

🪦 The Real Casualty: The "Body Shops". The IT outsourcing model (Infosys, Wipro, Cognizant) is built on labor arbitrage: hire for $15/hr in Bangalore, bill for $80/hr in NYC. The Problem: AI makes labor arbitrage worthless. You can't get cheaper than "nearly free." The Proof: India's Big 4 are already cutting thousands of jobs. The hiring machine has stopped.

🛑 The Bottom Line: The market is selling "Technology" as a monolith. This is a mistake. AI replaces Road Workers (IT services/Human-UI). AI pays Tolls (Infrastructure/APIs). The Play: Buy the dip in APIs. Short the slides. The infrastructure layer is the only place to hide when the bots take over.
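The seat-versus-consumption argument above can be put into rough numbers. The sketch below is illustrative only: the function names, seat counts, seat price, call volumes, and per-call price are hypothetical assumptions, not figures from the post. Only the $15/hr and $80/hr rates come from the text.

```python
# Illustrative sketch of the post's pricing logic.
# All seat counts, prices and call volumes are hypothetical assumptions;
# only the $15/hr cost and $80/hr billing rate are quoted in the post.

def seat_revenue(seats: int, price_per_seat: float) -> float:
    """Monthly revenue for a seat-based (Type 1) product."""
    return seats * price_per_seat


def usage_revenue(api_calls: int, price_per_call: float) -> float:
    """Monthly revenue for a consumption-based (Type 2) product."""
    return api_calls * price_per_call


def arbitrage_margin(cost_per_hour: float, bill_per_hour: float) -> float:
    """Gross margin of the labor-arbitrage model, as a fraction of billings."""
    return (bill_per_hour - cost_per_hour) / bill_per_hour


if __name__ == "__main__":
    # Hypothetical customer: headcount falls from 100 to 80 seats at $50/seat.
    before = seat_revenue(100, 50.0)
    after = seat_revenue(80, 50.0)
    print(f"Seat revenue: {before:.0f} -> {after:.0f}")

    # Hypothetical usage: API calls grow 10x at a fixed $0.001 per call.
    print(f"Usage revenue: {usage_revenue(1_000_000, 0.001):.0f} -> "
          f"{usage_revenue(10_000_000, 0.001):.0f}")

    # Rates quoted in the post: hire at $15/hr, bill at $80/hr.
    print(f"Arbitrage gross margin: {arbitrage_margin(15.0, 80.0):.2%}")
```

Under these assumed inputs, seat revenue moves one-for-one with headcount while usage revenue moves with call volume, which is the asymmetry the post relies on.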

The Most Important Investing Theme of 2026 is HALO

HALO stands for Heavy Assets, Low Obsolescence. These are companies that cannot be disrupted from an AI standpoint. There's nothing Sundar Pichai and Sam Altman can take from them. Source: Ritholtz

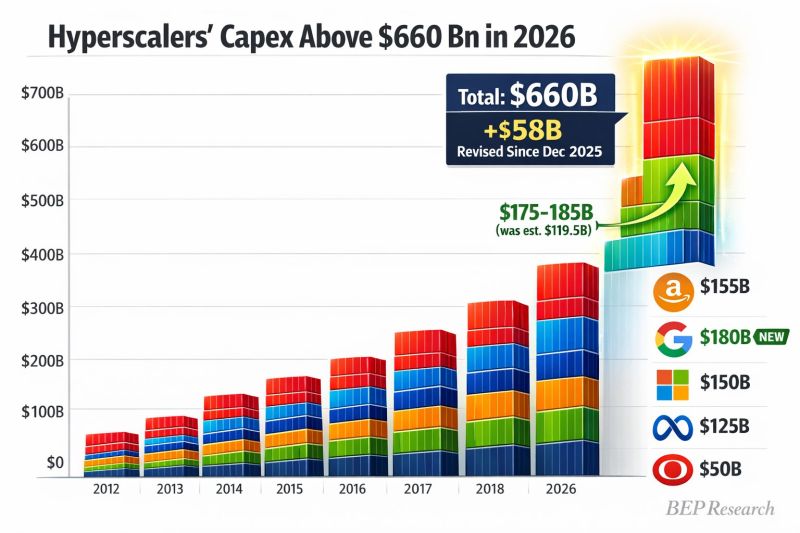

Hyperscaler capex just got revised UP $58B to $660B for 2026

Alphabet dropped a BOMB: $175-185B guidance vs. a $119.5B estimate. That's +$55B from Google alone. The AI infrastructure arms race just went nuclear ☢️ Source: Ben Pouladian @benitoz

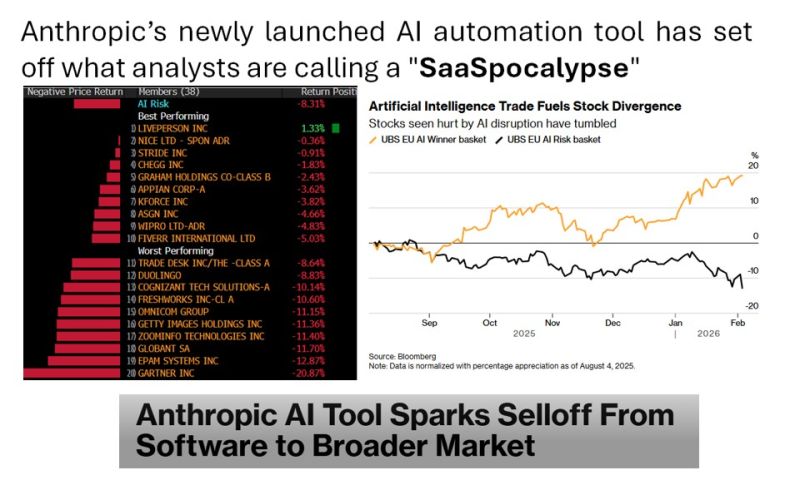
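As a quick check of the arithmetic in this post, the sketch below recomputes the implied prior hyperscaler total and the gap between Alphabet's guidance range and the estimate. It uses only the figures quoted above; the variable names are my own, and treating the "+$55B" as the low end of the range is an assumption about how the post rounded.

```python
# Figures quoted in the post, in billions of USD.
new_total_capex = 660.0      # revised 2026 hyperscaler capex
revision = 58.0              # size of the upward revision
guide_low, guide_high = 175.0, 185.0   # Alphabet capex guidance range
estimate = 119.5             # prior estimate for Alphabet

# Implied total before the revision, and the revision as a share of it.
prior_total_capex = new_total_capex - revision
revision_pct = revision / prior_total_capex

# Gap between guidance and estimate at the low end, midpoint and high end.
gap_low = guide_low - estimate
gap_mid = (guide_low + guide_high) / 2 - estimate
gap_high = guide_high - estimate

if __name__ == "__main__":
    print(f"Implied prior total: ${prior_total_capex:.0f}B "
          f"(revision of {revision_pct:.1%})")
    print(f"Alphabet gap vs estimate: low ${gap_low:.1f}B, "
          f"mid ${gap_mid:.1f}B, high ${gap_high:.1f}B")
```

On these inputs the low end of the guidance sits about $55B above the estimate, so Alphabet alone would account for most of the $58B revision.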

Anthropic’s newly launched AI automation tool has set off what analysts are calling a "SaaSpocalypse", rattling global technology markets

Anthropic recently released 11 new plug-ins for its Claude Cowork agent, an agentic, no-code AI assistant designed for enterprise users. The tool is aimed at automating tasks across legal, sales, marketing and data analysis functions. Vishwa Sharan (@vmsharan_): "Anthropic latest AI tool can automate tasks in legal, sales, marketing, and data analysis. Routine work such as document review, compliance tracking, risk flagging, and data processing. It essentially targets professionals in service automation. Expect reduced demand for consulting engagements, lesser billing hours. IT firms may see slower headcount growth or reductions as AI handles clerical work, shifting focus to higher level AI oversight and innovation." Source: Bloomberg
